In this second post on reinforcement learning (RL), we build on the introduction from part 1 by revisiting the idea of a reward and building up to the idea of discounted returns. Recall that the goal of RL is to maximize the rewards earned by the agent over time. We’re going to discuss three main ideas throughout this article: rewards, cumulative return, and discounted return. I’ll show them mathematically and then discuss their implications to help give you some intuition around what’s going on.

The Reward Signal

A “reward” is a scalar value returned by the environment at each timestep. In principle, it can be any real number, with no upper or lower limit. However, it usually helps to keep rewards bounded, as extremely large rewards might make the math more difficult later on. A reward can be positive (indicating the agent did something we wanted), negative (a penalty for doing something we didn’t want), or zero (a neutral outcome).

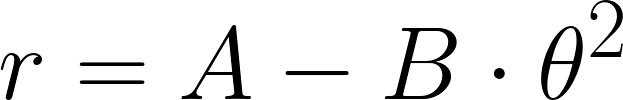

For something like our balance bot, we might reward staying upright while penalizing tilting forward or backward on each timestep. This might look like:

$$r = A - B\theta^2$$

Where r is the reward, A is a constant, B is a coefficient that sets the importance of the tilt (or pitch), and θ is the pitch (forward or backward) of the robot around the Y axis. In this example, A is a reward the bot automatically receives each timestep for staying “alive” (not tipping over past some angle). We then penalize the agent by subtracting an amount proportional to the square of the pitch, which measures how far the robot is tilted from vertical. Remember: this reward is calculated at each timestep (e.g. every 5 ms).
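A minimal Python sketch of this reward, assuming the pitch θ is measured in radians and using A=1.0 and B=5.0 (the values used in the concrete example later in this post). The function name `balance_reward` is just an illustrative choice:

```python
def balance_reward(pitch: float, a: float = 1.0, b: float = 5.0) -> float:
    """Dense per-timestep reward for a hypothetical balance bot.

    a: constant "alive" bonus received every timestep
    b: weight on the squared-pitch penalty
    pitch: forward/backward tilt around the Y axis, in radians
    """
    # Squaring the pitch penalizes forward and backward tilt equally
    # and punishes large tilts much more than small ones.
    return a - b * pitch ** 2


# The reward shrinks as the robot tilts further from vertical
for theta in (0.0, 0.1, 0.3, -0.3):
    print(f"theta={theta:+.1f} rad -> reward={balance_reward(theta):.2f}")
```

The identical results for +0.3 and -0.3 rad show why squaring is a convenient choice: the bot is penalized the same for leaning either way.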

But what about something like a game of chess, where some moves might not result in an immediate reward? In those cases, perhaps we offer zero reward for intermediate moves and only offer a reward at the very end of the game (reward for winning, penalty for losing). This would force the agent to figure out which moves to make to maximize its total reward (probability of winning games), even if it means sacrificing pieces early on!

The reward for our balance bot is known as a dense reward signal because it is given as feedback at every step. On the other hand, the reward signal for our theoretical chess-playing agent is known as a sparse reward signal, as it is given only at key moments (e.g. at the end of a game). Sparse signals are much harder for agents to learn from, as they must take many steps to receive a reward, store this data, and repeat many episodes before they can start to learn patterns that lead to better rewards.
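To contrast the two signal types, here is a sketch in Python. The +1/-1/0 win/loss/draw values, the 40-step game, and the slowly tipping pitch values are all invented for illustration, and the dense reward reuses the A-minus-B-times-squared-pitch form from above with A=1.0 and B=5.0:

```python
from typing import Optional


def sparse_chess_reward(done: bool, result: Optional[str]) -> float:
    """Hypothetical sparse reward: zero until the game ends.

    result is "win", "loss", or "draw" and is only read when done is True.
    The +1 / -1 / 0 values are illustrative, not a standard.
    """
    if not done:
        return 0.0  # intermediate moves give no feedback at all
    return {"win": 1.0, "loss": -1.0, "draw": 0.0}[result]


def dense_balance_reward(pitch: float, a: float = 1.0, b: float = 5.0) -> float:
    """Dense reward: feedback on every timestep (r = A - B * theta^2)."""
    return a - b * pitch ** 2


# A 40-step game the agent eventually wins: only the last step carries signal
chess_rewards = [
    sparse_chess_reward(done=(i == 39), result="win" if i == 39 else None)
    for i in range(40)
]
# 40 timesteps of a balance bot slowly tipping over: every step carries signal
balance_rewards = [dense_balance_reward(0.01 * i) for i in range(40)]

print("non-zero chess rewards:  ", sum(r != 0 for r in chess_rewards))
print("non-zero balance rewards:", sum(r != 0 for r in balance_rewards))
```

Only one of the 40 chess rewards carries any information, while every balance-bot reward does, which illustrates why the sparse version needs so many more episodes before patterns emerge.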

The reward signal is left entirely up to the system creator, and it’s one of the most important design decisions in any RL project. Designing such functions is often considered an “art,” and usually requires a lot of tinkering to get the agent to perform as intended. RL engineers will spend many hours writing and revising reward functions to produce the intended effects while minimizing side effects.

Return: Total Accumulated Rewards

We can design reward functions however we want. In almost all cases in RL, we quantize continuous time into discrete steps. At each timestep, we receive an observation from the environment, which includes a reward for the previous action we took. How do we measure how well our actions did? How do we decide what to do next?

Our first step in answering these questions is to introduce the concept of the return, which is the total accumulated rewards over an episode. There are two ways to view a return: one is looking backward on how well the agent performed in a finished episode, and the other is the amount of total rewards we expect to get in the future. These perspectives are given different names:

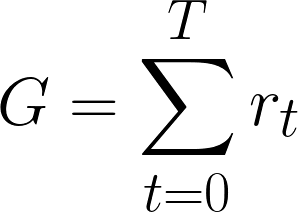

- Episodic return: the total reward collected over a completed episode (for example: our robot attempting to balance then falling over, a single game of chess, etc.). This is a retrospective measurement. We can simply sum the rewards over a given episode to get this return.

- Discounted return: the total reward collected from timestep t onward, with future rewards weighted by a discount factor gamma (γ) so that rewards further in the future are worth less than immediate ones. When looking back on a completed episode, this can be calculated exactly from the rewards that were actually collected. When looking forward during training, it represents a prediction of future rewards the agent has not yet received. We’ll formalize that forward-looking prediction as the expected return in the next post.

Here’s how we would calculate episodic return (we just sum the rewards over the whole episode):

$$G = \sum_{t=1}^{T} r_t$$

Where:

- G is the return value

- t is the timestep

- T is the final (terminal) timestep in the episode

- $r_t$ is the reward given at that timestep
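As a quick sketch of that sum in Python (the function name `episodic_return` is illustrative, and the rewards come from the balance-bot reward with A=1.0 and B=5.0, evaluated at the pitches used in the concrete example later in this post):

```python
def episodic_return(rewards):
    """Episodic return G: the plain sum of every reward r_t from t=1 to T."""
    return sum(rewards)


# Balance-bot rewards r = A - B*theta^2 (A=1.0, B=5.0) for a 5-step episode
pitches = [0.10, 0.20, 0.30, 0.40, 0.55]
rewards = [1.0 - 5.0 * theta ** 2 for theta in pitches]
print(f"G = {episodic_return(rewards):.4f}")
```

Every reward counts equally here, including the final penalty for tipping over; for these pitches the sum works out to 1.9875.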

Remember, agents ideally want to maximize G, not just individual rewards. That means looking back at previous actions to determine which ones produced the best return. This can be tricky, depending on how the rewards are structured! As in our chess example, large rewards might not arrive for a long time, and maximizing G often involves sacrificing short-term rewards (e.g. giving up pieces) for long-term gains (e.g. winning the game).

Now, let’s look at the future return, as it provides the foundation for RL. Our goal is to maximize this value for a given agent, environment, and reward function. Remember, we want our agent to pick the next action that will ideally provide good (or the best) rewards in the future. And this is where the math starts to get tricky. I’ll break this down into bite-sized steps throughout the series so you can hopefully start to wrap your head around what’s going on.

The idea of accumulating rewards seems straightforward enough, but raw episodic returns have a couple of properties that make them difficult to work with directly.

The Problem With Raw Returns

First, continuing tasks (i.e. ones that never end) can accumulate infinite future reward, which is mathematically difficult or impossible to work with. Second, future rewards are uncertain, so we can’t know exactly what an episode’s return will be until it actually plays out.

Future rewards can be uncertain for a few reasons. The first is that the environment does not always respond to the same action in the same way. A few examples:

- In a game of chess, the opponent might make a move the agent did not predict

- In a card game like blackjack or poker, the next card drawn is random

- Robots might encounter uneven terrain, obstacles, or people walking around that cannot be perfectly captured in simulation

This type of randomness is known as stochastic transitions, which means that the path from one state to the next has some element of randomness to it (e.g. a human suddenly appeared in the robot’s path, which was not expected).

The other type of randomness is stochastic rewards. The reward signal itself might have some randomness, even if the transitions are deterministic (i.e. not stochastic). For example, pulling a lever on a slot machine produces random rewards.
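To make the two kinds of randomness concrete, here is a toy sketch. Both functions, and the probabilities in them (a 10% slot-machine payout, a 20% chance the robot is blocked), are invented for illustration:

```python
import random


def slot_machine_pull(rng: random.Random) -> float:
    """Stochastic reward: the same action (pulling the lever) pays out randomly.

    The 10% chance of a 10-coin payout is an invented value.
    """
    return 10.0 if rng.random() < 0.1 else 0.0


def robot_step(position: int, rng: random.Random) -> int:
    """Stochastic transition: 'move forward one cell' usually succeeds,
    but with an invented 20% chance an obstacle blocks the robot in place.
    """
    blocked = rng.random() < 0.2
    return position if blocked else position + 1


rng = random.Random(0)  # fixed seed so the run is repeatable
pulls = [slot_machine_pull(rng) for _ in range(100)]
print("payouts in 100 identical lever pulls:", sum(p > 0 for p in pulls))

position = 0
for _ in range(10):
    position = robot_step(position, rng)
print("cells moved after 10 identical 'forward' commands:", position)
```

In both cases the agent does exactly the same thing every time, yet the outcome varies; that variability is what compounds as we look further ahead.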

The further we look into the future to try to predict rewards, the fuzzier it gets thanks to all these stochastic (random) processes compounding. The good news is that we can still use this information to help train the agent to make decisions that maximize its expected return.

Discounted Future Returns

Would you rather have $100 now or 1 year from now? I imagine you’d want the cash now. With inflation and the opportunity to invest the money, that $100 is generally worth more if you take it now. You also don’t know exactly what the future holds; perhaps an emergency comes up between now and the 1-year mark where you’d need that money.

This is where the idea of discounted rewards comes into play. When we start trying to figure out the expected return for an episode, we usually want our agent to prioritize immediate rewards over future rewards. That is, of course, assuming the rewards are the same size! Sometimes, it’s worth holding out for a bigger payout in the future (e.g. losing a chess piece now to win the whole game).

Timesteps

Remember that we’re now looking out into the future. A particular timestep is given by the variable t. So, imagine that we’re standing on a timeline at time t looking out into the future. This might be in the middle of a chess match (say, move #10), and you’re trying to look ahead to figure out what you and your opponent might do. Or it might be at the very start of the balance bot’s attempt to remain upright (i.e. t=0).

It’s important to take a moment to discuss what a timestep looks like. In a typical RL framework, the basic loop looks like this (leaving out the “learning” aspect for now):

1. You take a “step” in the environment by providing an action; the action is performed, and the environment returns a reward and an observation.

2. You feed the new observation into the agent, which uses it to choose a new action for the next step.

3. Go to 1.
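The loop above can be sketched in Python with a hypothetical toy environment. `ToyBalanceEnv`, its made-up dynamics (a constant forward drift plus tilt that grows on its own), and the random `choose_action` placeholder are all assumptions for illustration, not any particular library’s API:

```python
import random


class ToyBalanceEnv:
    """Hypothetical, highly simplified balance-bot environment (not real physics).

    The observation is the pitch in radians. Each step, the pitch drifts forward
    by 0.05 rad, any existing tilt grows by 10%, and the action nudges it.
    """

    def __init__(self, max_pitch: float = 0.5):
        self.max_pitch = max_pitch
        self.pitch = 0.0

    def reset(self) -> float:
        self.pitch = 0.0
        return self.pitch

    def step(self, action: float):
        # Apply the action and the toy dynamics to get the new pitch
        self.pitch = 1.1 * self.pitch + 0.05 + action
        reward = 1.0 - 5.0 * self.pitch ** 2  # r = A - B*theta^2 with A=1.0, B=5.0
        done = abs(self.pitch) > self.max_pitch  # episode ends once the bot tips over
        return self.pitch, reward, done


def choose_action(observation: float, rng: random.Random) -> float:
    """Placeholder agent (no learning yet): a random nudge in [-0.05, 0.05]."""
    return rng.uniform(-0.05, 0.05)


env = ToyBalanceEnv()
rng = random.Random(42)
observation = env.reset()
action = choose_action(observation, rng)
rewards = []
for _ in range(100):  # cap the episode at 100 timesteps
    # Step 1: act in the environment; get back a reward and a new observation
    observation, reward, done = env.step(action)
    rewards.append(reward)
    if done:
        break
    # Step 2: feed the new observation to the agent to choose the next action
    action = choose_action(observation, rng)
    # Step 3: loop back to step 1

print(f"episode lasted {len(rewards)} timesteps, total reward {sum(rewards):.2f}")
```

The "learning" part is deliberately missing: a real agent would use the collected rewards to improve `choose_action` over many episodes.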

When we talk about future rewards, we are considering them from within step 2 of that process. We assume that we are currently at timestep t and have already received the reward and observation for timestep t. That means the agent (in step 2 of the process) must decide, based on the observation from t, on the best action for t+1 and beyond.

Calculating Discounted Future Returns

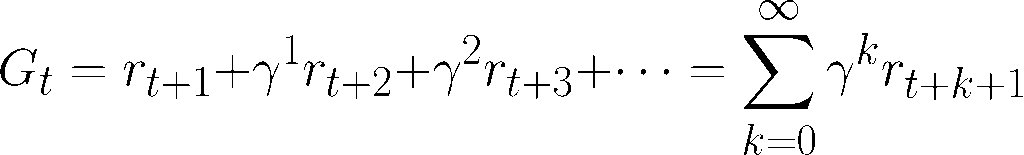

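Assuming the environment and rewards are deterministic, so that we know exactly which rewards we will receive in the future, we would express this future return as follows:

$$G_t = \sum_{k=0}^{\infty} \gamma^k R_{t+k+1} = R_{t+1} + \gamma R_{t+2} + \gamma^2 R_{t+3} + \cdots$$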

Where:

- $G_t$ is the return from the current timestep t looking out to infinity

- $R_{t+k+1}$ is the reward received k+1 timesteps after the current timestep t

- γ is the discount factor, usually between 0 and 1
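A short derivation shows why discounting fixes the infinite-return problem for continuing tasks. Assume every reward is bounded in magnitude by some constant $R_{\max}$ (a symbol introduced here just for this bound) and that $0 \le \gamma < 1$. Because $G_t$ is a sum of rewards weighted by $\gamma^k$, the geometric series caps it:

$$|G_t| = \left|\sum_{k=0}^{\infty} \gamma^k R_{t+k+1}\right| \le \sum_{k=0}^{\infty} \gamma^k R_{\max} = \frac{R_{\max}}{1-\gamma}$$

For example, with γ = 0.9 and rewards no larger than 1 in magnitude, $|G_t|$ can never exceed 1/(1 - 0.9) = 10, however long the task runs. With γ = 1 the series has no such bound, which is why γ is typically kept strictly below 1 for continuing tasks.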

Note that we raise γ to the power of k. The further in the future a reward arrives, the less it is worth to us now (at timestep t). With γ = 0.9, for example, the reward 10 timesteps in the future (where k = 9) is weighted by $0.9^9 \approx 0.387$, so it is worth only about 39% as much as a reward received on the very next step.
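The weights can be tabulated directly. This sketch assumes γ = 0.9 and a 10-step horizon; `discount_weights` is an illustrative helper name:

```python
def discount_weights(gamma: float, horizon: int) -> list:
    """Return [gamma^0, gamma^1, ..., gamma^(horizon - 1)].

    Entry k is the weight on R_{t+k+1}, the reward k+1 steps after timestep t.
    """
    return [gamma ** k for k in range(horizon)]


for k, w in enumerate(discount_weights(0.9, 10)):
    print(f"k={k} (reward {k + 1} steps ahead): weight {w:.3f}")
```

The last line printed (k=9) is the 0.387 weight from the paragraph above.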

Another way to think about the discount factor: γ = 0 makes the agent “shortsighted,” caring only about the immediate reward, whereas γ = 1 makes it patient, weighting every future reward as heavily as the immediate one.
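Here is a sketch comparing several discount factors on the same made-up reward stream: a reward of 1 on the very next step and a payout of 10 five steps after that. `discounted_return` is an illustrative helper that applies the discounted sum to a finite list of future rewards:

```python
def discounted_return(future_rewards, gamma):
    """G_t = sum_k gamma^k * R_{t+k+1}, where future_rewards[k] is R_{t+k+1}."""
    return sum(gamma ** k * r for k, r in enumerate(future_rewards))


# Hypothetical reward stream: 1 on the next step, then 10 five steps later
future_rewards = [1.0, 0.0, 0.0, 0.0, 0.0, 10.0]

for gamma in (0.0, 0.5, 0.9, 1.0):
    print(f"gamma={gamma}: G_t = {discounted_return(future_rewards, gamma):.3f}")
```

With γ = 0 only the immediate reward of 1 counts, while γ = 1 counts the full 11; values in between trade off the two. (Python evaluates `0.0 ** 0` as 1.0, so the immediate reward keeps its full weight even when γ = 0.)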

Concrete Example

Let’s look at a simple example for our balance bot: every 5 ms, we take an action and receive a reward from the environment. We’ll use our reward function from above, with A=1.0 and B=5.0. The following table shows an example episode over 5 timesteps where the robot starts upright then falls over (not a very good agent!). We’ll assume that the episode stops once the robot passes a pitch of 0.5 radians, and we’ll use a discount factor of γ=0.9. Note that I’ve rounded to 2 decimal places to make it easier to read.

| Timestep (t) | Pitch θ (rad) | Reward ($r_t$) | $G_t$ undiscounted (γ=1) | $G_t$ discounted (γ=0.9) |
|---|---|---|---|---|
| 1 | 0.10 | 0.95 | 1.04 | 1.08 |
| 2 | 0.20 | 0.80 | 0.24 | 0.31 |
| 3 | 0.30 | 0.55 | -0.31 | -0.26 |
| 4 | 0.40 | 0.20 | -0.51 | -0.51 |
| 5 | 0.55 | -0.51 | 0 | 0 |

Remember: at a given timestep, we have already received that timestep’s reward from the environment, and $G_t$ measures the predicted return looking forward from t+1 onward (not including the reward received at time t). At t=5 the episode has ended, so there are no future rewards and the return is 0.

Let’s walk through the math at time t=2. The pitch is 0.2 radians (the bot is starting to tip), which means our reward for that timestep is:

$$R_2 = 1.0 - 5.0 \cdot 0.2^2 = 0.8$$

The undiscounted return looks forward, summing the rewards from timestep t+1 until the end of the episode (timesteps 3, 4, and 5 in this example). Here are the rewards for t = 3, 4, and 5:

$$R_3 = 1.0 - 5.0 \cdot 0.3^2 = 0.55$$

$$R_4 = 1.0 - 5.0 \cdot 0.4^2 = 0.2$$

$$R_5 = 1.0 - 5.0 \cdot 0.55^2 = -0.5125$$

So, the undiscounted (effectively γ=1) return at t=2 is the sum of the future rewards at t = 3, 4, and 5:

$$G_2 = 0.55 + 0.2 - 0.5125 = 0.2375$$

And the discounted (γ=0.9) return at t=2 is given by:

$$G_2 = 0.9^0 \cdot 0.55 + 0.9^1 \cdot 0.2 + 0.9^2 \cdot (-0.5125) = 0.314875$$

By discounting, we are saying that rewards in the future are not as important as the ones right now. That includes penalties: seen from t=2, the -0.5125 penalty at t=5 is scaled by $0.9^2 = 0.81$, which is why our discounted return is higher than the undiscounted version.
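The whole table can be reproduced in a few lines. This sketch computes every $G_t$ at once by walking backward through the finished episode and using $G_t = R_{t+1} + \gamma G_{t+1}$, which is just a rearrangement of the discounted sum (one common way to compute returns, not necessarily how the table was produced). `returns_from_rewards` is an illustrative name:

```python
def returns_from_rewards(rewards, gamma):
    """Compute G_t for every timestep of a finished episode.

    rewards[i] is the reward received at timestep t = i + 1. G_t counts only
    rewards after t, via G_t = R_{t+1} + gamma * G_{t+1}, with G_T = 0.
    """
    returns = [0.0] * len(rewards)
    g = 0.0  # return of the timestep after the current one (0 past the end)
    # Walk backward so each G_t can reuse the already-computed G_{t+1}
    for i in reversed(range(len(rewards))):
        returns[i] = g  # G_t excludes the reward received at t itself
        g = rewards[i] + gamma * g  # becomes G_{t-1} for the previous timestep
    return returns


A, B = 1.0, 5.0
pitches = [0.10, 0.20, 0.30, 0.40, 0.55]
rewards = [A - B * theta ** 2 for theta in pitches]

undiscounted = returns_from_rewards(rewards, gamma=1.0)
discounted = returns_from_rewards(rewards, gamma=0.9)

print("t  pitch  reward   G_t(1.0)  G_t(0.9)")
for t, row in enumerate(zip(pitches, rewards, undiscounted, discounted), start=1):
    theta, r, g_undisc, g_disc = row
    print(f"{t}  {theta:.2f}   {r:+.2f}    {g_undisc:+.2f}     {g_disc:+.2f}")
```

Walking backward avoids re-summing the tail of the episode for every timestep, and it makes the t=2 values from the walkthrough fall out alongside all the others.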

It’s important to note a major assumption here: we have treated our $G_t$ values as perfect predictions of the future. In reality, that’s almost never true. The environment changes, random processes act on our robot, and small electrical differences affect how our motors respond, all of which make the environment difficult to model as fully deterministic.

In the next post, we will introduce the concept of expected values. If you’ve worked with statistics, then this should be a familiar concept. It assumes some level of variability in the system, and we’ll use that to create a better measurement of predicted returns, which we’ll call the expected return.

Building an Effective Reward Structure

Let’s take a step back and talk about a few high-level training techniques RL engineers have at their disposal. I want to mix this in with the math-heavy discussions so you can start to see how designers work with the math and various programming techniques to produce useful and robust agents.

Agents will often find loopholes or converge on local maxima that “solve” the problem in unintended ways. In 2016, OpenAI wrote a fun article detailing these pitfalls as they experimented with training RL agents to play video games. In the boat racing game CoastRunners, the objective is to drive a speedboat around a course, hitting blue targets along the way to collect points. Rather than complete the course as intended, the OpenAI agent simply drove in circles, collecting the blue targets that periodically respawned.

As a personal example, when I tried to use RL to play QWOP, the agent learned to have the character simply scoot along in a kneeling position. It technically worked, as the goal of “go far” was met. However, it never really figured out how to walk or run properly, thus missing out on beating the game in a timely fashion (and earning the most points).

From a philosophical perspective, a truly powerful AI given the objective to “make as many paperclips as possible” might realize that killing humans, consuming all the Earth’s resources, and prioritizing its own existence would help maximize that objective. This thought experiment highlights how a misaligned objective, even a seemingly harmless one, can lead to catastrophic unintended consequences.

The moral of these stories is simple: think carefully about how you craft your rewards! Let’s take a moment to introduce a few strategies that RL engineers commonly use to help agents learn the intended (and hopefully morally justifiable) behavior.

- Reward shaping: adding intermediate rewards to the environment to help guide the agent. For example, you might give small rewards for agents that capture pieces in a game of chess in addition to offering a big reward for winning. However, this can backfire: an agent rewarded too heavily for capturing pieces might focus too much on the short-term gain rather than winning the overall game.

- Intrinsic motivation: also known as “curiosity-driven exploration,” we give the agent small rewards for encountering states it hasn’t seen before, which encourages exploration. This can help when the external reward signal is sparse or delayed in complex environments where the agent is not likely to accidentally stumble onto a meaningful reward.

- Curriculum learning: the practice of training an agent in a simplified version of the problem and gradually increasing the difficulty as it improves. With a balance bot, you might initially train on having it stay upright (which might mean driving around to achieve this goal), then add in the challenge of staying in one place.

- Imitation learning: rather than trial-and-error learning (where the agent starts by trying random actions and learning from the rewards), rewards are tied to mimicking a human-provided solution, such as playing a video game or having a quadruped robot match the gait of a real dog. Often, this gives the agent a head start, and it can still improve beyond the original solution, as one agent did when it beat the QWOP world record.

- Inverse reinforcement learning (IRL): a close relative of imitation learning. Instead of copying the expert’s behavior directly, it tries to infer a reward function consistent with the expert’s behavior, and then trains the agent on that recovered reward. It’s a powerful technique but often much more complex to implement than direct imitation.
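Two of these strategies are easy to sketch as reward wrappers. Both pieces below are illustrative assumptions rather than a standard implementation: the capture bonus weight of 0.01 is arbitrary, and the count-based bonus β/√N(s) is only one simple form of intrinsic motivation (practical curiosity methods for large or continuous state spaces are more elaborate):

```python
from collections import Counter


def shaped_chess_reward(captured_value: float, done: bool, won: bool,
                        capture_weight: float = 0.01) -> float:
    """Reward shaping sketch: sparse win/loss reward plus a small capture bonus.

    captured_value is the (hypothetical) material value of any piece captured
    this move; capture_weight is kept small so winning still dominates.
    """
    reward = capture_weight * captured_value
    if done:
        reward += 1.0 if won else -1.0
    return reward


class CountBasedNoveltyBonus:
    """Intrinsic-motivation sketch: states visited less often earn a larger
    bonus, beta / sqrt(visit count). States must be hashable.
    """

    def __init__(self, beta: float = 0.1):
        self.beta = beta
        self.visits = Counter()

    def __call__(self, state) -> float:
        self.visits[state] += 1
        return self.beta / self.visits[state] ** 0.5


bonus = CountBasedNoveltyBonus(beta=0.1)
for state in ["A", "A", "A", "A", "B"]:
    # Reward the agent learns from = external reward (0 here) + novelty bonus
    print(state, round(0.0 + bonus(state), 3))
```

The bonus for "A" fades with each repeat visit while the first visit to "B" earns the full 0.1, nudging the agent toward unexplored states. On the shaping side, if `capture_weight` were large relative to the win reward, capturing pieces could become more valuable to the agent than winning, which is exactly the backfire described under reward shaping.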

Conclusion

So far, we’ve given a broad overview of RL, reward functions, and returns. We also discussed why we add a discount factor to return calculations. However, we have been operating under the assumption that we have perfect, omniscient insight into the future and can accurately predict future rewards. In the next post, we will introduce some uncertainty into the equation to compute expected returns through value functions.

If you have any questions, something didn’t land clearly, or I missed something, please let me know in the comments!